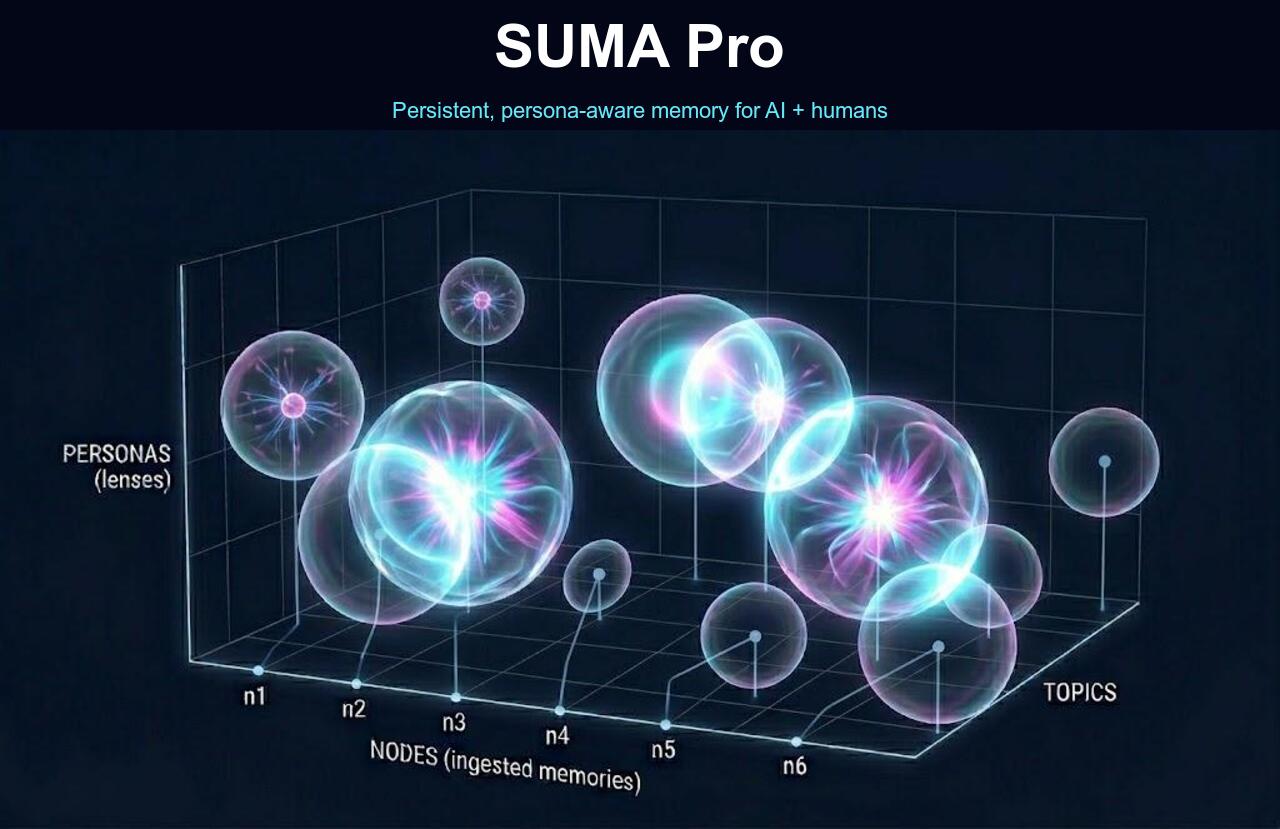

Memory in 3D.

Each sphere is one ingested memory. Spheres overlap where the same fact is visible to multiple personas. Same memory, multiple lens-views.

X = nodes (ingested memories) · Y = personas (lenses) · Z = topics / subtopics. Each sphere's volume = its weighted reach across personas + topics + subtopics.
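
As a rough illustration of the volume rule above, here is a minimal sketch that treats "weighted reach" as a weighted sum of how many personas, topics, and subtopics a memory touches, then derives the sphere radius from that volume. The `Memory` class, the weight values, and the function names are all hypothetical; the figure does not specify how the weights are actually chosen or combined.

```python
import math
from dataclasses import dataclass, field

# Hypothetical weights: the source does not state how reach is weighted.
W_PERSONA = 1.0
W_TOPIC = 0.5
W_SUBTOPIC = 0.25


@dataclass
class Memory:
    """One ingested memory (a node on the X axis)."""
    node_id: str
    personas: set = field(default_factory=set)   # lenses that can see it (Y axis)
    topics: set = field(default_factory=set)     # Z axis, coarse level
    subtopics: set = field(default_factory=set)  # Z axis, fine level


def weighted_reach(m: Memory) -> float:
    """Assumed definition: weighted count of personas + topics + subtopics."""
    return (
        W_PERSONA * len(m.personas)
        + W_TOPIC * len(m.topics)
        + W_SUBTOPIC * len(m.subtopics)
    )


def sphere_radius(m: Memory) -> float:
    """Radius of a sphere whose volume equals the weighted reach.

    Inverts V = (4/3) * pi * r^3 to get r = (3V / (4*pi)) ** (1/3).
    """
    volume = weighted_reach(m)
    return (3.0 * volume / (4.0 * math.pi)) ** (1.0 / 3.0)


if __name__ == "__main__":
    m = Memory(
        node_id="m1",
        personas={"analyst", "support"},
        topics={"billing"},
        subtopics={"refunds", "invoices"},
    )
    print(weighted_reach(m))  # 2*1.0 + 1*0.5 + 2*0.25
    print(sphere_radius(m))
```

Mapping reach to volume rather than to radius keeps a memory with twice the reach from looking eight times larger, which is one reason a renderer might take the cube root as done here.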